The phone rings. You answer politely with a brief “Hello?” (or, in Japan, “Moshi moshi?”), and then comes an unnerving silence. It feels like a wrong number or a prank. But cybersecurity experts warn that even a few seconds of your voice could become raw material for AI-driven fraud. A growing wave of alerts on social media points to “silent calls” as the opening move in voice-cloning scams. In these scams, criminals capture tiny snippets of audio and use them to build synthetic voices that can later impersonate you or your loved ones with startling credibility.

What the FTC Warned About

The United States Federal Trade Commission sounded the alarm in March 2023, noting that scammers are using AI to supercharge “family emergency” schemes. In its consumer alert, “Scammers use AI to enhance their family emergency schemes,” the agency explains that a short audio clip of a family member’s voice, which could be taken from content posted online, is all a voice-cloning program needs to produce a convincing imitation. A brief greeting captured by a silent caller could serve the same purpose. The FTC’s guidance is here: https://consumer.ftc.gov/consumer-alerts/2023/03/scammers-use-ai-enhance-their-family-emergency-schemes. Although the alert dates from 2023, the threat has only grown more relevant as generative audio tools proliferate and improve.

How a Few Seconds of Your Voice Become a Weapon

Modern AI voice synthesis systems can be trained on surprisingly small samples. Researchers and vendors alike have demonstrated that short utterances—“yes,” “hello,” “hai,” or “moshi moshi”—combined with natural sounds like breathing or a quick acknowledgment can provide enough acoustic data for a convincing clone. If scammers already know your name, workplace, or family ties from social media or data breaches, they can pair those facts with a copied voice to create a powerful illusion. With that, they launch the next phase: a call that seems to come from your boss pressing for an urgent transfer, your trading partner rushing a payment, or your child begging for help. The speed and calm confidence of a “familiar” voice can override skepticism, especially when paired with pressure, confidentiality, or urgency—classic social-engineering triggers.

In the past, many “silent calls” were simply dialer tests or number-verification probes. Today, they can double as data-collection events. Even if you say just one word, that micro-sample has value. And because AI doesn’t need a perfect recording, low-fidelity clips captured over a phone line may still be sufficient to build a passable impersonation—particularly for short, high-stress conversations designed to keep you off balance.

From “Ore Ore” to AI: Japan’s Familiar Threat Evolves

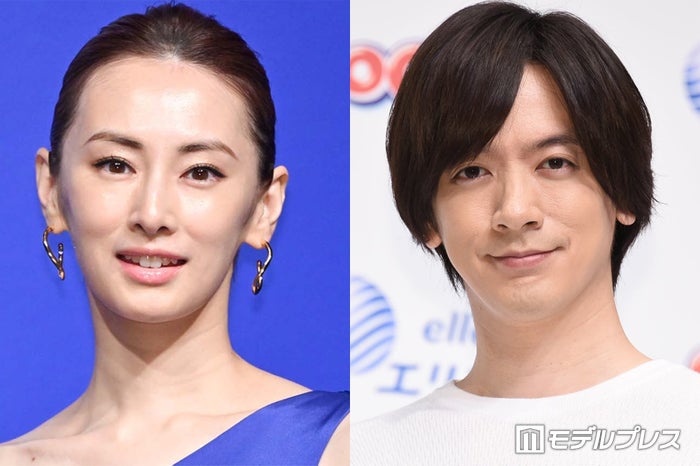

Japan has long battled imposter scams, known locally as “ore ore” (“it’s me, it’s me”) fraud, along with other “special fraud” schemes targeting families, seniors, and businesses. The AI twist makes these cons harder to spot because the voice on the line can sound eerily right. A scammer pretending to be a grandchild may now also sound like one. Criminals have also used celebrity images or videos in online ads, overlaid with AI-generated audio, to push dubious investments. Japan’s vigilance, rooted in years of community education and proactive policing, is a strength here. But the landscape is shifting, and it demands updated habits and stronger verification across society: at home, in offices, and throughout supply chains.

Japanese consumers benefit from robust telecom and financial ecosystems that already prioritize safety, and mobile carriers continue to expand call-filtering and fraud-detection features. Yet the core challenge is human: voice alone is no longer a reliable proof of identity. That means we must consciously downgrade our trust in audio and rebuild it on verifiable processes.

Red Flags and Immediate Steps for Individuals

- If you see an unfamiliar number, let it roll to voicemail. A legitimate caller can leave a message identifying themselves and their purpose.

- If you do answer and are met with silence, avoid speaking. End the call promptly.

- If the caller suddenly claims to be a family member in trouble or a known contact, but something feels off, hang up and call back using a number you already have saved, not the one that just called.

- Consider setting a family “safe word” for emergencies and agree in advance never to act on requests for money or sensitive data without it.

- Limit public exposure of your voice where practical—review social media privacy settings, and be cautious about posting voice notes publicly.

- Enable call-screening or spam filters offered by your carrier or device manufacturer, and keep your phone’s software updated.

- Document suspicious calls, block the number, and report incidents to your carrier and local authorities or consumer hotlines.

The Corporate Playbook: Policies That Outpace the Scam

- Mandate multi-channel verification for money movement or sensitive requests. Voice is never enough: require confirmation via secure messaging or email, plus a callback to a number already on file.

- Adopt a “no surprise urgency” rule: time-sensitive instructions must follow a pre-established procedure. If those steps aren’t followed, staff are empowered to say no.

- Train teams with realistic scenarios, including AI-voice impersonation of executives, suppliers, and customers. Codify decision trees and document acceptable verification methods.

- Harden vendor management: set shared passphrases or callback protocols with key partners, and keep an updated, independently verified directory of contacts.

- Log and review suspicious calls centrally. Treat incidents as near-misses to improve controls, not as individual mistakes.

- Coordinate with banks and insurers on fraud-response plans so that when seconds matter, you already know what to do and whom to call.

Technology, Policy, and Etiquette: Japan Can Lead

As voice cloning grows more accessible, the arms race will intensify. Detection tools, such as audio-watermark checks or “liveness” tests that probe for artifacts of synthesis, are promising, but they are not a panacea. Caller-authentication frameworks, such as STIR/SHAKEN in the United States, are advancing worldwide, yet organizational discipline remains decisive. Japan is well placed to lead with a balanced approach: practical consumer guidance, rigorous enterprise standards, and innovation by telecoms, banks, and startups that spot anomalies in real time.

Public awareness also matters. We cherish politeness, which makes “moshi moshi” instinctive. The new etiquette is to let voicemail answer unknown calls and to verify by design, not by gut feel. Parents can discuss safe words with children; companies can rehearse escalation paths; communities can share alerts at the neighborhood and workplace level. These simple habits, repeated, form a powerful national firewall.

The Bottom Line

Voice is no longer definitive proof of identity. A silent call might be more than a nuisance; it could be reconnaissance. By refusing to speak to unknown callers, by verifying requests via trusted channels, and by institutionalizing multi-factor checks at home and at work, Japan can turn caution into a competitive advantage. If silence greets you on the line, hang up. Let your processes—not a familiar-sounding voice—make the decision. In the AI era, that pause could be the first and finest barrier to fraud.