Google’s Threat Intelligence Group (GTIG) has published its latest AI Threat Tracker, revealing that since late 2025, global threat actors have moved from experimenting with artificial intelligence to operational deployment across the cyber kill chain. Released on February 13, 2026 (local time), the report draws on Google Cloud analysis and spotlights an alarming rise in attempts to steal the intellectual property of mature AI models, with Google’s Gemini a particular target.

What the report says

According to GTIG, “model extraction” attacks, in which adversaries craft sequences of prompts to reproduce a model’s inference behavior, have climbed sharply. Over the past year, attackers have intensified efforts to infer models’ internal reasoning processes and chain-of-thought, alongside “distillation” attempts aimed at siphoning sensitive model information. Gemini’s inference capabilities were frequently in the crosshairs, GTIG notes, with activity observed not only among criminal actors but also in efforts resembling testing that were attributed to some private-sector and academic participants.

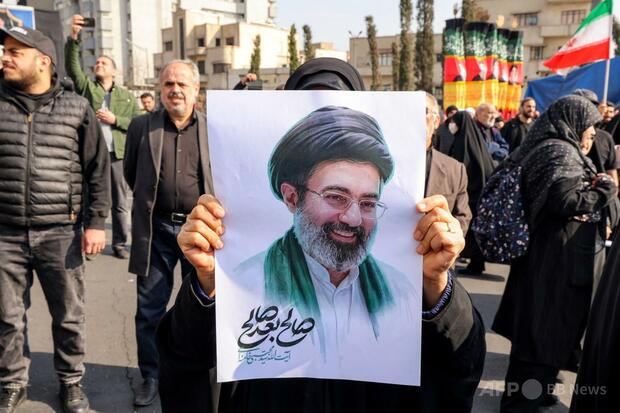

State-linked groups remain active adopters of generative AI. Iran-backed APT42 reportedly leverages AI to discover official email addresses and generate convincing pretexts, refining spear-phishing campaigns. North Korea–linked UNC2970 has used Gemini to synthesize public data on high-value individuals, improving the realism and efficiency of recruiter-impersonation operations.

Malware and phishing go AI-native

GTIG highlights the emergence of malware that delegates functionality to AI services. In late 2025, the malware family dubbed HONESTCUE was observed calling Gemini via API to generate certain behaviors on demand—an approach designed to complicate static analysis and traditional network detection. On the phishing front, AI code-generation tools have shortened build times. A kit called COINBAIT, masquerading as major cryptocurrency exchanges, rapidly stood up polished fake websites to harvest credentials.

In Russian- and English-language underground forums, demand for AI abuse-as-a-service remains high. Many actors, unable to train their own frontier models, increasingly depend on stolen API keys for commercial AI platforms. One service marketed as “Xanthorox” claimed to auto-generate malware code but was found to be illicitly proxying requests to commercial AI APIs.

Why this matters for Japan

Japan’s digitizing economy—spanning advanced manufacturing, finance, and world-class research—relies heavily on AI for productivity and innovation. The reported pivot from experimentation to full-scale operational use of AI by threat actors raises the stakes for Japanese enterprises, universities, and government agencies. Japan’s leadership in responsible AI, from the G7 Hiroshima AI Process to ongoing policy work by METI and the Digital Agency, positions the country to set pragmatic, pro-growth guardrails. But the findings underscore that governance must be matched by day-to-day technical controls, especially as model IP and sensitive training data become prime targets.

Practical steps for organizations in Japan

- Harden model endpoints: throttle and monitor anomalous prompt patterns, enforce strict authentication, and segment high-value inference pipelines.

- Protect keys: rotate and scope API keys, use hardware-backed secrets, and monitor for leakage or unusual spend indicative of abuse.

- Defend data and prompts: minimize exposure of system prompts and proprietary context, apply data loss prevention to inputs/outputs, and restrict retrieval to vetted sources.

- Build phishing resilience: pair advanced email filtering with continuous awareness training in both Japanese and English, reflecting tactics seen from APT42 and UNC2970.

- Manage third-party risk: scrutinize AI-powered tools and kits; verify they do not relay requests to unauthorized commercial APIs.
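
As one illustration of the "throttle and monitor" step in the list above, the following is a minimal sketch of a per-key sliding-window throttle. It does not come from the GTIG report: the class name `SlidingWindowThrottle`, the `flagged` set, and the thresholds are hypothetical choices made for this example. A real deployment would likely combine such a limiter with authentication, logging, and review of prompt content.

```python
from collections import defaultdict, deque
from typing import Optional
import time


class SlidingWindowThrottle:
    """Per-key request throttle over a sliding time window (illustrative only)."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # Hypothetical in-memory state: key id -> timestamps of accepted requests.
        self._hits = defaultdict(deque)
        # Key ids that reached the limit at least once; candidates for review,
        # not proof of abuse.
        self.flagged = set()

    def allow(self, key_id: str, now: Optional[float] = None) -> bool:
        """Return True if the request may proceed, False if it is throttled."""
        now = time.monotonic() if now is None else now
        hits = self._hits[key_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            self.flagged.add(key_id)
            return False
        hits.append(now)
        return True


if __name__ == "__main__":
    # Assumed limit for the example: 3 requests per 60 seconds per API key.
    throttle = SlidingWindowThrottle(max_requests=3, window_seconds=60.0)
    for t in (0.0, 1.0, 2.0, 3.0):
        print(t, throttle.allow("key-A", now=t))
    print("flagged:", sorted(throttle.flagged))
```

A sliding window is used here rather than a fixed per-minute counter so that bursts straddling a minute boundary are still counted together. Keys that land in `flagged` could then be fed into whatever monitoring an organization already runs for unusual volume or spend.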

The bigger picture

GTIG’s analysis points to a structural shift: AI is now an efficiency multiplier for attackers, powering faster credential theft, more adaptive malware, and targeted social engineering. For Japan, the same technologies—securely deployed—remain a competitive edge. With coordinated action across industry, academia, and government, from rigorous key management to robust model governance, Japan can safeguard its innovation base while continuing to champion trustworthy AI across the Indo-Pacific and beyond.

GTIG concludes that improving model protection and tightening API key management are immediate priorities. For Japan’s globally connected firms and the international community living and working here, the message is clear: the AI era rewards those who pair innovation with disciplined security.